Online Investment Scams

May 18, 2026

Online investing has never been more accessible or dangerous. But the most insidious investment scams rarely start with an obvious pitch; they usually begin with what looks like education, community...

Resources

Artificial intelligence is now embedded in hiring tools, chatbots, recommendation engines, and countless “smart” products. When AI causes business damage, financial loss, or consumer harm, the fallout can be immediate and severe.

If you believe you or your business has suffered damages from AI, contact our team today for a free consultation.

AI liability arises when an AI system or AI-driven product causes financial, reputational, emotional, or physical harm. This can include faulty recommendations, biased decisions, misuse of personal data, or dangerous interactions with AI chatbots or virtual agents.

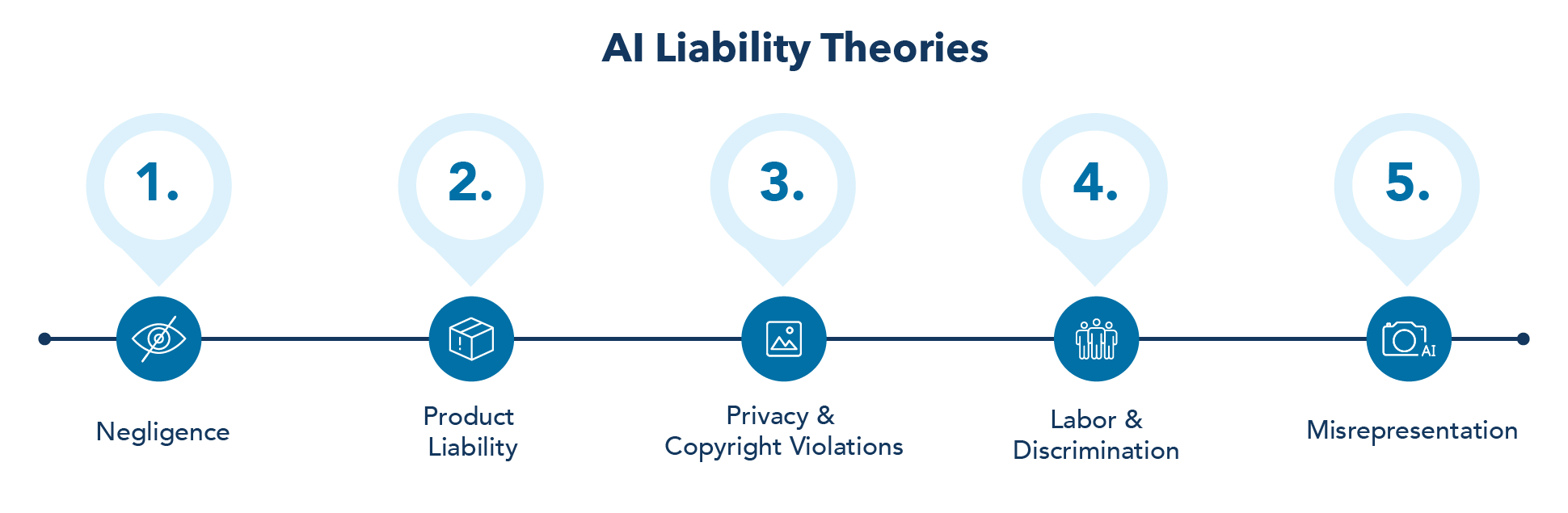

Common AI liability theories include negligence, product liability, unfair or deceptive trade practices, privacy violations, discrimination, and misrepresentation about what an AI tool can safely do. These theories have long been applied to traditional software, and regulators and courts are quickly adapting them to AI-enabled tools and services.

AI tools increasingly shape what people see, buy, and do. When those tools fail, consumers can suffer serious harm, such as:

The firm helps individuals and groups of consumers pursue claims where AI products are unsafe, deceptive, or deployed without reasonable protections.

Businesses rely on AI to automate decisions, analyze data, and interact with customers. When AI malfunctions or is misrepresented, the business impact can be immediate:

The firm represents businesses that have been harmed by AI vendors, platforms, and technology partners that failed to deliver safe, lawful, and transparent AI solutions.

When a few large firms control data, compute, and key AI platforms, AI can reinforce monopolies and raise antitrust concerns. Potential legal issues include:

Excessive use of AI for automation, along with the resulting wage suppression and failure to improve worker productivity, intersects with employment and antidiscrimination law. Legally significant harms include:

AI systems often rely on massive data collection, creating significant privacy and data protection exposure. From a legal perspective, this can involve:

AI-driven personalization can narrow consumer choice and enable manipulative design, which has consumer protection implications.

Relevant legal risks include:

AI accidents arise when AI systems act in unexpected or unsafe ways, leading to harm in everyday settings. These incidents typically blend technical failures with human mistakes, such as overreliance on automation or delayed intervention. Common patterns include:

In most of these situations, the harm is not caused by the AI system alone but by an interaction between design flaws, inadequate oversight, and human complacency or misunderstanding of the system’s limits.

Companies are also litigating situations where employees feed confidential information into external AI services, arguably destroying its secrecy or transferring the information to third parties.

When employees paste proprietary content (source code, design documents, pricing models, client lists) into public LLMs, the business can lose effective control over that information and jeopardize trade secret status.

LLM training and outputs can harm the economic value of an artist's work, even if courts ultimately find training to be fair use in some contexts.

Get Legal Help Now

March 16, 2026

Kronenberger Rosenfeld, LLP has filed a lawsuit in San Francisco Superior Court against cryptocurrency exchange Kraken and related entities. The complaint, available here, alleges that Kraken disclosed a high-value crypto...

Announcements

February 26, 2026

See Article: https://poliscio.com/crypto-pardon-doesnt-end-troubles-for-zhao-binance/ Galen Cheney (Associate) was recently featured in PoliScio’s FOIAengine coverage of the continuing legal and regulatory fallout surrounding Binance and its founder, Changpeng “CZ” Zhao. In the...

Featured Media

October 8, 2025

Recently on Fox Business, Coinbase CEO Brian Armstrong spelled out the company’s ultimate objective: “...We want to be a bank replacement for people…We want to be their primary financial services...

Resources

September 12, 2025

As a major update in the firm’s elder financial abuse cryptocurrency lawsuit, the District Court for the Northern District of California, in a groundbreaking order in the case of Lee...

Announcements

August 13, 2025

The U.S. Senate has passed the GENIUS Act (Guiding and Establishing National Innovation for U.S. Stablecoins Act), marking a major milestone in the regulation of payment stablecoins in the United...

Resources